Using AI to Predict Content Performance Before Publishing

Published Apr 20, 2026 | By Admin

Publishing content has traditionally involved a degree of uncertainty. Teams research a topic, create an asset, review it carefully, and launch it with the hope that it will attract attention, support business goals, and perform well with the intended audience. Even with strong editorial processes and historical analytics, there is often still a gap between what teams expect and what actually happens once the content goes live. Some pieces perform far better than expected, while others fail to gain momentum despite significant effort. This unpredictability makes content planning more difficult, especially for organizations producing high volumes of material across many channels.

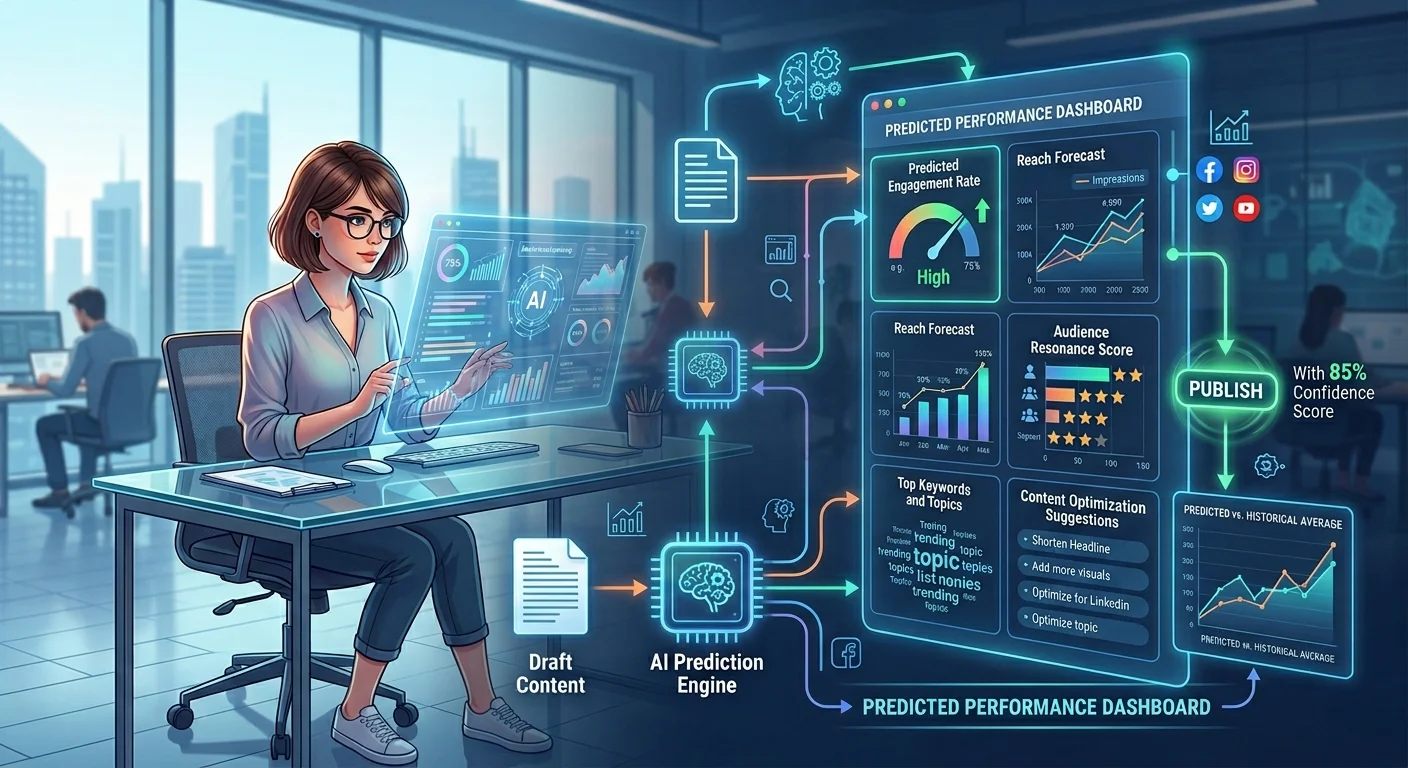

AI is changing that by helping businesses estimate likely performance before publication. Instead of relying only on intuition, past experience, or limited benchmarking, teams can use AI to analyze structured content signals and compare them with patterns from previously published assets. This does not mean AI can predict the future with perfect certainty. What it can do is improve the quality of the decision-making that happens before content is launched. It can highlight likely strengths, identify possible weaknesses, and help teams refine content before it enters the market.

This matters because pre-publication insight gives teams a real operational advantage. Businesses can prioritize higher-potential content, improve weaker drafts earlier, and allocate editorial resources more intelligently. Rather than waiting for performance data after the fact, teams can use predictive signals to shape content proactively. In a digital environment where speed and relevance both matter, that ability can meaningfully improve how content strategies are planned and executed.

Why Predicting Content Performance Matters

Predicting content performance before publishing matters because content creation requires time, budget, coordination, and strategic focus. Every article, landing page, support guide, campaign asset, or product resource competes for editorial attention and production capacity. When businesses have no reliable way to estimate likely impact, they often treat all content projects as roughly equal until results arrive. That can lead to wasted effort on low-value assets, delayed investment in stronger opportunities, and a more reactive content strategy overall. This is also where the benefits of a headless CMS over WordPress become relevant: a more structured and flexible content foundation can make it easier to analyze, test, and optimize content before and after publishing.

A stronger predictive approach helps businesses make better choices earlier. If teams can identify which topics are likely to resonate, which formats may support stronger engagement, or which structural weaknesses may reduce performance, they can improve the content before it is published or shift resources toward more promising work. This does not eliminate experimentation, but it makes experimentation more informed. Teams can still take creative risks, while also understanding the probable strengths and weaknesses of what they are about to publish.

This is especially valuable in organizations with high publishing volume or multiple stakeholders. Predictive insight helps reduce guesswork in planning, supports better prioritization, and strengthens confidence in editorial decisions. Instead of launching content and only then learning whether it had potential, businesses can start shaping likely outcomes earlier in the process.

Why Traditional Pre-Publication Review Is Not Enough

Traditional pre-publication review remains important, but it is often limited in what it can reveal. Editors and marketers are typically strong at evaluating clarity, tone, accuracy, structure, and alignment with brand standards. They can identify whether a piece reads well, whether it matches audience expectations, and whether the messaging supports business goals. However, even experienced teams can struggle to estimate likely performance at scale because content success depends on many factors working together, including topic relevance, competitive context, structural choices, metadata quality, user intent, and historical audience behavior.

Human review is also influenced by perspective and time pressure. A draft may seem strong because it is well written, but still underperform because the topic is saturated or the format does not match the likely user need. Another asset may look simple, yet have strong performance potential because it aligns well with search demand, journey timing, or audience behavior patterns. These are not always easy judgments to make consistently through editorial review alone, especially across hundreds or thousands of content assets.

AI does not replace editorial judgment in this process. Instead, it complements it by bringing pattern recognition and analytical comparison into the pre-publication stage. That allows businesses to combine human quality control with data-driven forecasting. The result is a more complete decision framework than editorial review alone can usually provide.

How AI Uses Historical Patterns to Estimate Future Performance

AI predicts content performance by learning from patterns in previously published content and comparing those patterns with the attributes of new drafts. This usually involves looking at structured data points such as topic, content type, metadata, publication timing, format, audience segment, search behavior, journey role, and historical engagement outcomes. By identifying which combinations of attributes have historically been associated with stronger or weaker results, AI can estimate the probable performance profile of content that has not yet been published.

This does not mean AI is reading one draft and making a magical prediction in isolation. It is using prior evidence. If certain topic clusters tend to perform well among specific audiences, if some headline structures consistently improve engagement, or if one content format has historically underperformed in a given context, the model can use that information to assess new content against those learned patterns. The prediction is based on comparison and probability rather than certainty.
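The comparison described above can be illustrated with a deliberately simple baseline. The sketch below is not a trained model: it assumes a hypothetical list of past assets, each with a `topic`, a `content_type`, and an `engagement` score normalized to 0..1, and it estimates a draft's engagement as the average of past assets that share its attributes, falling back to broader groups when no close match exists. Production systems would likely use richer features and statistical or machine-learning models, but the idea of comparing a draft against prior evidence is the same.

```python
from statistics import mean

# Hypothetical history: each past asset pairs structured attributes with an
# observed outcome (here an engagement score normalized to the 0..1 range).
HISTORY = [
    {"topic": "pricing", "content_type": "guide", "engagement": 0.72},
    {"topic": "pricing", "content_type": "guide", "engagement": 0.64},
    {"topic": "pricing", "content_type": "news", "engagement": 0.31},
    {"topic": "onboarding", "content_type": "guide", "engagement": 0.55},
]

# Attribute combinations to try, from most specific to least specific.
# The empty tuple matches every past asset (the overall baseline).
KEY_SETS = (("topic", "content_type"), ("topic",), ())


def estimate(draft, history, key_sets=KEY_SETS):
    """Estimate a draft's engagement from past assets that share its attributes.

    Returns (estimate, attributes_used, sample_size). A small sample_size is a
    signal to treat the estimate with extra caution.
    """
    for keys in key_sets:
        matches = [
            record["engagement"]
            for record in history
            if all(record[k] == draft[k] for k in keys)
        ]
        if matches:
            return mean(matches), keys, len(matches)
    raise ValueError("history is empty; nothing to compare against")


print(estimate({"topic": "pricing", "content_type": "guide"}, HISTORY))
print(estimate({"topic": "pricing", "content_type": "webinar"}, HISTORY))
print(estimate({"topic": "security", "content_type": "guide"}, HISTORY))
```

Here the first draft matches two past guides on the same topic, the second falls back to the three pricing assets, and the third falls back to the overall average. Returning the sample size alongside the estimate keeps the probabilistic nature of the prediction visible: an estimate drawn from two assets deserves less confidence than one drawn from hundreds.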

This type of analysis becomes especially powerful when organizations have a large and well-structured content history. The richer the past dataset, the more useful the pattern recognition becomes. That is why predictive content analysis tends to work best when businesses already manage content in a structured, measurable way. AI needs clean signals in order to produce meaningful estimates.

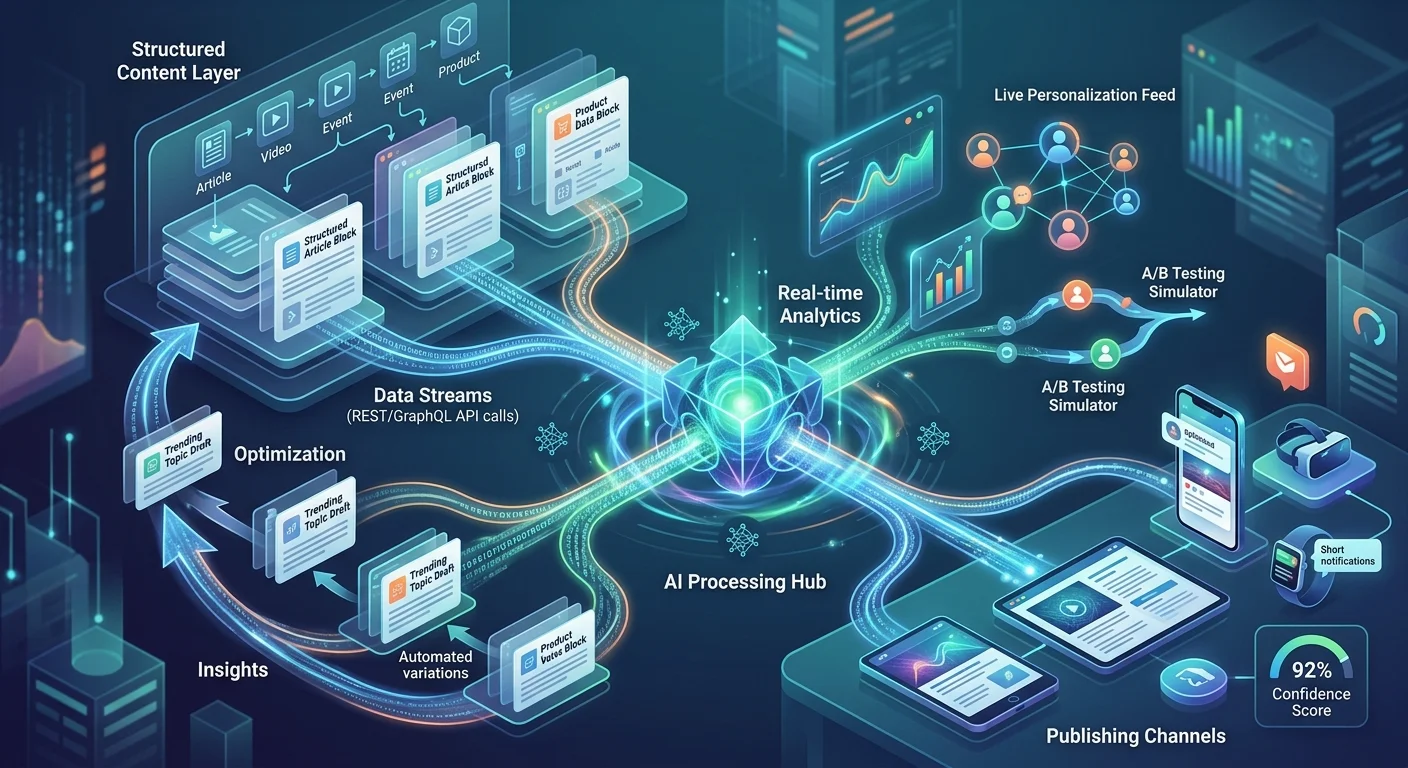

Structured Content Makes Prediction More Reliable

Structured content is essential to reliable prediction because it gives AI clear features to evaluate. If content exists only as large, unstructured page text, the model has to infer too much on its own. It may still identify some patterns in language, but it has less access to the content’s intended role, category, audience, or structural design. In a structured system, however, the model can work with more dependable inputs such as title, summary, topic tags, content type, product associations, taxonomy labels, and journey stage.

This makes prediction far more meaningful. Instead of only asking whether one article looks similar to another, AI can compare much richer content attributes. It can examine whether a draft belongs to a category that historically performs strongly, whether its metadata aligns with successful assets, or whether its structure matches one that has worked well for a similar audience in the past. This allows the model to interpret content as a business asset rather than only as a block of text.
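To make the contrast with unstructured text concrete, here is a minimal sketch assuming a hypothetical `ContentItem` record with explicit title, summary, content type, journey stage, and topic tag fields. The `features` function turns those fields into comparable inputs, and the illustrative `tag_alignment` function measures how much of a draft's tagging overlaps with tags on top-performing assets of the same type. Both functions and all field names are assumptions for illustration, not a specific CMS schema.

```python
from dataclasses import dataclass, field


@dataclass
class ContentItem:
    """A structured content entry; field names are illustrative assumptions."""
    title: str
    summary: str
    content_type: str
    journey_stage: str
    topic_tags: set[str] = field(default_factory=set)


def features(item: ContentItem) -> dict:
    """Turn explicit content fields into features a model can compare."""
    return {
        "content_type": item.content_type,
        "journey_stage": item.journey_stage,
        "title_words": len(item.title.split()),
        "has_summary": bool(item.summary.strip()),
        "tag_count": len(item.topic_tags),
    }


def tag_alignment(draft: ContentItem, top_performers: list[ContentItem]) -> float:
    """Share of the draft's tags that also appear on top performers of the same type."""
    if not draft.topic_tags:
        return 0.0
    peer_tags = set().union(
        *(p.topic_tags for p in top_performers if p.content_type == draft.content_type)
    )
    return len(draft.topic_tags & peer_tags) / len(draft.topic_tags)


top = [
    ContentItem("Set up SSO in minutes", "Step-by-step SSO setup.", "guide",
                "onboarding", {"sso", "security"}),
    ContentItem("Q3 pricing update", "What changed in pricing.", "news",
                "consideration", {"pricing"}),
]
draft = ContentItem("SSO and pricing explained", "", "guide", "consideration",
                    {"sso", "security", "pricing"})
print(features(draft))
print(tag_alignment(draft, top))
```

In this example, two of the draft's three tags appear on the top-performing guide, so the alignment is 2/3, and the empty summary shows up directly as `has_summary: False`. Neither signal would be as easy to read reliably from a single block of page text.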

Structured content also makes performance analysis more consistent across teams and channels. If similar content types follow the same model, AI can compare them more reliably and identify patterns that are less distorted by inconsistent formatting or unclear categorization. That improves not only prediction quality, but also trust in the predictions themselves.

What AI Can Evaluate Before Content Goes Live

AI can evaluate many useful signals before content is published, especially when the content system is structured well. It can assess whether a topic resembles previously successful or weak-performing themes, whether the headline and summary structure align with patterns associated with stronger engagement, and whether the metadata or taxonomy choices fit the likely user intent. It can also detect whether the content is overly similar to existing assets, which may suggest duplication risk or weak differentiation.
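Duplication risk, in its simplest form, is a similarity check between a draft and the existing library. The sketch below uses Jaccard similarity over lowercase word sets as a deliberately simple stand-in; production systems would more likely compare text embeddings. The `duplication_risk` function, the asset IDs, and the 0.6 threshold are all hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens; a deliberately simple stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap of two token sets: len(a & b) / len(a | b), in the range 0..1."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def duplication_risk(draft_text: str, existing: dict[str, str], threshold: float = 0.6):
    """Return (asset_id, similarity) pairs at or above threshold, most similar first."""
    draft_tokens = tokens(draft_text)
    scored = [(asset_id, jaccard(draft_tokens, tokens(text)))
              for asset_id, text in existing.items()]
    flagged = [pair for pair in scored if pair[1] >= threshold]
    return sorted(flagged, key=lambda pair: pair[1], reverse=True)


existing = {
    "kb-101": "How to reset your password quickly",
    "kb-202": "Billing FAQ for annual plans",
}
print(duplication_risk("How to reset your password", existing))
```

The draft shares five of six distinct words with `kb-101` (similarity 5/6), so it is flagged, while the billing FAQ is not. Raising or lowering the threshold trades missed duplicates against false alarms.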

In some environments, AI can also examine whether a draft fits the expected standards of a given content type. For example, it may identify that a support article lacks the clarity typically associated with high-performing help resources, or that a demand-generation page is missing elements often present in stronger conversion-supporting assets. It may flag that a piece appears too broad for the search intent it is targeting, or that its structure does not match what users typically engage with in that category.
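One way to learn content-type expectations from data, rather than hard-coding them, is to check which elements appear in most top performers of a given type and flag drafts that lack them. The sketch below assumes each asset is a hypothetical dict with a `content_type` and a set of named `elements` (for example `cta` or `testimonial`); the function names and the 80% share cutoff are illustrative assumptions.

```python
from collections import Counter


def common_elements(top_performers, content_type, min_share=0.8):
    """Elements present in at least min_share of top performers of one content type."""
    peers = [p for p in top_performers if p["content_type"] == content_type]
    if not peers:
        return set()
    # Count each element at most once per asset.
    counts = Counter(element for p in peers for element in set(p["elements"]))
    return {e for e, c in counts.items() if c / len(peers) >= min_share}


def missing_elements(draft, top_performers, min_share=0.8):
    """Elements common among strong peers that the draft does not include."""
    expected = common_elements(top_performers, draft["content_type"], min_share)
    return expected - set(draft["elements"])


top_performers = [
    {"content_type": "landing_page", "elements": {"cta", "testimonial", "pricing_table"}},
    {"content_type": "landing_page", "elements": {"cta", "testimonial"}},
    {"content_type": "landing_page", "elements": {"cta", "testimonial", "faq"}},
    {"content_type": "support_article", "elements": {"steps", "screenshots"}},
]
draft = {"content_type": "landing_page", "elements": {"testimonial", "faq"}}
print(missing_elements(draft, top_performers))
```

Every top-performing landing page in the sample includes a call to action, so a draft without one is flagged. Because the expectation comes from the data, the flag works as a prompt for review rather than a rigid rule.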

These evaluations do not need to become rigid rules. Their value lies in helping teams see likely issues earlier. AI can surface signals that would be difficult to detect consistently by hand, especially when many assets are being reviewed at once. That makes pre-publication analysis more practical and more scalable.

Topic Selection Becomes More Strategic

One of the most useful applications of AI prediction is in topic selection. Many businesses create content based on a mix of intuition, stakeholder requests, trend observation, and basic research. While these methods can still be helpful, they often leave uncertainty around which topics are actually worth prioritizing. AI can strengthen this stage by comparing proposed topics with historical performance patterns and signaling which themes are more likely to support meaningful outcomes.

For example, AI may identify that one topic cluster consistently performs well among a certain audience while another has historically generated weak engagement despite strong internal enthusiasm. It may show that one subject tends to support qualified conversions while another drives traffic with little downstream value. These signals help businesses avoid investing equally in all ideas and instead focus on themes more likely to create measurable impact.
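Distinguishing topics that drive traffic from topics that drive downstream value can start with a simple aggregation. The sketch below assumes a hypothetical history of assets with `topic`, `visits`, and `conversions` fields, ranks topic clusters by conversion rate, and skips clusters with too few assets to judge. The function names are illustrative, and conversion rate is only one possible outcome metric; the right measure depends on the goals of each content program.

```python
from collections import defaultdict


def topic_summary(history):
    """Aggregate asset counts, visits, and conversions per topic cluster."""
    totals = defaultdict(lambda: {"assets": 0, "visits": 0, "conversions": 0})
    for record in history:
        t = totals[record["topic"]]
        t["assets"] += 1
        t["visits"] += record["visits"]
        t["conversions"] += record["conversions"]
    for t in totals.values():
        t["conv_rate"] = t["conversions"] / t["visits"] if t["visits"] else 0.0
    return dict(totals)


def rank_topics(history, min_assets=2):
    """Rank topics by conversion rate, skipping topics with too little history."""
    summary = topic_summary(history)
    eligible = [(topic, s) for topic, s in summary.items() if s["assets"] >= min_assets]
    return sorted(eligible, key=lambda item: item[1]["conv_rate"], reverse=True)


history = [
    {"topic": "integrations", "visits": 1000, "conversions": 30},
    {"topic": "integrations", "visits": 800, "conversions": 24},
    {"topic": "industry-news", "visits": 5000, "conversions": 10},
    {"topic": "industry-news", "visits": 6000, "conversions": 12},
    {"topic": "pricing", "visits": 400, "conversions": 20},
]
for topic, s in rank_topics(history):
    print(f"{topic}: {s['visits']} visits, {s['conv_rate']:.1%} conversion rate")
```

In this sample, `industry-news` draws far more visits but converts at a fifteenth of the rate of `integrations`, while `pricing` is held back from the ranking because a single asset is too little evidence.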

This does not mean AI should replace editorial strategy or subject-matter expertise. It means those decisions can be supported by better evidence. Topic planning becomes less about guesswork and more about choosing opportunities with a stronger probability of success. That can significantly improve efficiency, especially for organizations with limited content production capacity.

AI Helps Refine Content Before Publication

Predictive AI is not only useful for deciding whether content should be published. It is also valuable for improving the content before it goes live. If the model identifies likely weaknesses, teams can make targeted changes rather than waiting to learn through underperformance later. A draft may need a clearer title structure, a stronger summary, better metadata, tighter topical focus, or closer alignment with the intended audience. AI can help surface these areas early, giving editors a better chance to strengthen the asset before launch.

This creates a much more proactive content workflow. Instead of publishing first and correcting later, teams can treat prediction as an early feedback layer. A content strategist may revise a topic angle. An editor may improve structural clarity. A marketer may adjust metadata or supporting calls to action. These improvements can often be made quickly, but they are much easier to prioritize when AI highlights which elements are most likely to affect performance.
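Turning a prediction into an editing to-do list can be as simple as ranking where a draft falls furthest short of comparable strong assets. The sketch below assumes hypothetical feature scores normalized to 0..1 and per-feature importance weights, which in practice might come from a fitted model; the `revision_priorities` function and the feature names are illustrative only.

```python
def revision_priorities(draft, benchmark, weights):
    """Rank draft features by weighted shortfall against a benchmark.

    draft, benchmark: feature name -> score normalized to 0..1 (higher is better).
    weights: feature name -> importance, e.g. taken from a fitted model (assumed).
    Only features where the draft falls short of the benchmark are returned.
    """
    gaps = []
    for feature, weight in weights.items():
        shortfall = benchmark.get(feature, 0.0) - draft.get(feature, 0.0)
        if shortfall > 0:
            gaps.append((feature, weight * shortfall))
    return sorted(gaps, key=lambda pair: pair[1], reverse=True)


draft = {"title_clarity": 0.4, "summary_quality": 0.7, "metadata_completeness": 0.5}
benchmark = {"title_clarity": 0.8, "summary_quality": 0.75, "metadata_completeness": 0.9}
weights = {"title_clarity": 0.5, "summary_quality": 0.2, "metadata_completeness": 0.3}

for feature, impact in revision_priorities(draft, benchmark, weights):
    print(f"{feature}: weighted gap {impact:.2f}")
```

Here the title gap carries the most weight, followed by metadata, while the summary is already close to the benchmark, which suggests where an editor's time is best spent.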

This also improves resource efficiency. Teams do not need to over-edit everything equally. They can focus their effort where the predictive signals suggest it matters most. In high-volume environments, that makes a major difference because it allows quality improvements without turning every asset into an endless review cycle.

Predictive Signals Improve Editorial Prioritization

Editorial teams are often faced with more work than they can realistically publish or optimize at once. Predictive signals can help solve this by improving prioritization. If a business has multiple drafts in progress, AI can help identify which ones are more likely to perform well, which may need more work, and which may have lower potential in their current form. This allows teams to make more informed scheduling and resource decisions before content goes live.

For example, a high-potential asset may be moved forward in the production queue because it aligns well with successful historical patterns. A lower-confidence draft may still be published, but perhaps in a different channel or after further refinement. Another piece may be deprioritized entirely because the predicted value does not justify immediate production effort. These are practical editorial decisions that become much easier when supported by structured predictive analysis.

This does not remove the role of experimentation or business judgment. There will always be cases where a strategically important topic deserves publication even if predicted performance is uncertain. The key benefit is that teams can distinguish those intentional exceptions from routine planning. That makes editorial prioritization more strategic and less driven by volume or internal pressure alone.
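The prioritization described above maps naturally onto a small triage step. The sketch below assumes each draft has a hypothetical `predicted` score between 0 and 1 and an optional `strategic` flag for intentional exceptions; the `triage` function and the 0.7 and 0.4 thresholds are illustrative assumptions that a team would tune to its own capacity.

```python
def triage(drafts, advance_at=0.7, refine_at=0.4):
    """Sort draft IDs into queues by predicted score (0..1), highest first.

    Thresholds are illustrative assumptions. Drafts flagged as strategic are
    kept in their own queue as intentional exceptions, whatever their score.
    """
    queues = {"advance": [], "refine": [], "deprioritize": [], "strategic": []}
    for item in sorted(drafts, key=lambda d: d["predicted"], reverse=True):
        if item.get("strategic"):
            queues["strategic"].append(item["id"])
        elif item["predicted"] >= advance_at:
            queues["advance"].append(item["id"])
        elif item["predicted"] >= refine_at:
            queues["refine"].append(item["id"])
        else:
            queues["deprioritize"].append(item["id"])
    return queues


drafts = [
    {"id": "sso-guide", "predicted": 0.82},
    {"id": "pricing-faq", "predicted": 0.55},
    {"id": "team-news", "predicted": 0.20},
    {"id": "compliance-brief", "predicted": 0.15, "strategic": True},
    {"id": "api-quickstart", "predicted": 0.91},
]
print(triage(drafts))
```

With these inputs, `api-quickstart` and `sso-guide` move forward, `pricing-faq` goes back for refinement, `team-news` is deprioritized, and `compliance-brief` stays in its own queue despite a low score, keeping strategic exceptions separate from routine planning.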